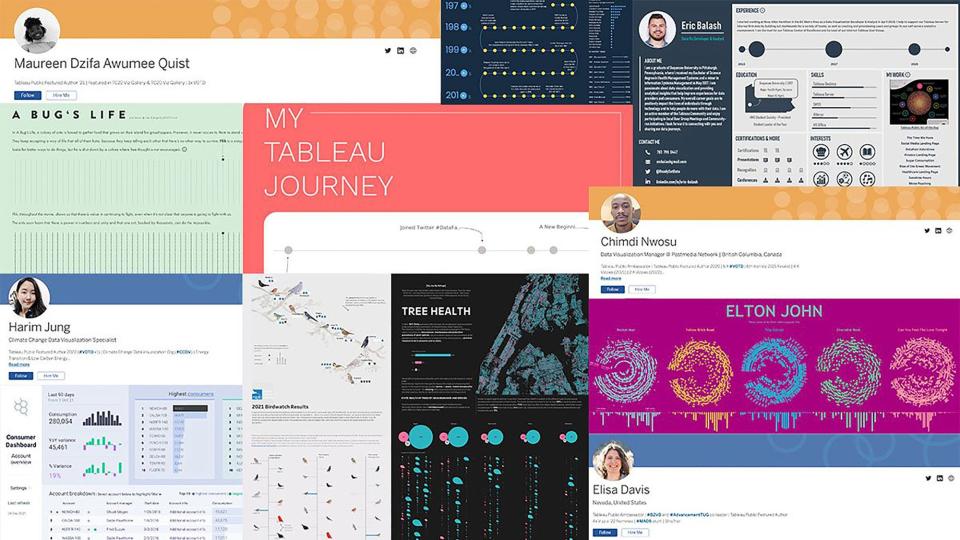

The Tableau Public Viz Gallery at Tableau Conference 2024

Explore the 2024 Viz Gallery featuring 31 captivating vizzes from global creators.

Olivia DiGiacomo

Tableau Public team

Emily Kund

May 10, 2024

Britt Staniar

April 30, 2024

Kate VanDerAa

April 29, 2024

Danika Harrod

April 22, 2024

Tableau Public team

April 22, 2024

Danika Harrod

April 15, 2024

Danika Harrod

April 8, 2024

Larissa Amoroso

March 20, 2024

Kate VanDerAa

March 18, 2024

Tableau Public team

March 5, 2024

Kate VanDerAa

March 1, 2024

Sarah Molina

February 15, 2024