Fraud risk expert assesses data climate in government offices

This is part two of a two-part conversation with Linda Miller, a principal at Grant Thornton, where she leads the Fraud Risk Mitigation and Analytics Practice. Miller previously served as an Assistant Director with the Forensic Audits and Investigative Service at GAO for 10 years. She led development of A Framework for Managing Fraud Risks in Federal Programs, the first-ever guidance to help federal program managers proactively manage fraud risk and help prevent fraud. Her efforts led to the passage of the Fraud Reduction and Data Analytics Act.

How has the evolution of technology and data management impacted public sector fraud risk since you started in the field?

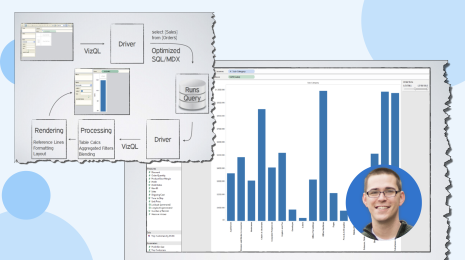

Government is, generally, still in the pretty nascent stages of using data analytics in a meaningful way. Data visualization is mostly just parsing and making sense of data—bringing to life in a visual way relationships and trends that you can’t see in a spreadsheet. That’s still magic to some people.

Two years ago, Grant Thornton built a set of Tableau dashboards for a client on travel and purchase cards. The dashboards were basic, with different views: for example, we could look at who used a card on holidays, who used a card for transactions with non-approved merchants, and so on. Then we showed them a dashboard that displayed employees who held more than 12 cards. They said, “What? We have employees with more than 12 purchase cards?” They didn’t even know that was happening. No one had ever run an analysis to look at that. This is basic data visualization, but it was novel to them. It’s gratifying to help clients with the most basic visualization tools and see how impactful they can be.
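As an illustration of how simple the card-count view is, here is a minimal sketch in Python. It is not the dashboard logic described in the interview; the record layout (pairs of employee ID and card ID), the function name, and the sample data are all assumptions made for the example.

```python
from collections import defaultdict


def employees_over_card_limit(card_assignments, limit=12):
    """Return {employee_id: distinct card count} for employees holding more than `limit` cards.

    `card_assignments` is an iterable of (employee_id, card_id) pairs.
    The layout is hypothetical; a real card file would need mapping first.
    """
    cards_by_employee = defaultdict(set)
    for employee_id, card_id in card_assignments:
        # A set ignores repeated rows for the same card.
        cards_by_employee[employee_id].add(card_id)
    return {
        employee_id: len(cards)
        for employee_id, cards in cards_by_employee.items()
        if len(cards) > limit
    }


# Hypothetical sample: E001 holds 13 cards, E002 holds 2.
sample = [("E001", f"C{n:03d}") for n in range(13)]
sample += [("E002", "C100"), ("E002", "C101")]
flagged = employees_over_card_limit(sample)
```

With the sample above, only E001 would be surfaced for review. The threshold of 12 comes from the anecdote; an agency would presumably set its own limit.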

That’s why Tableau is so valuable now to these agencies. It’s a great data visualization platform, and they’re just starting with it and learning how valuable it is. The Tableau name comes up all the time. Agencies are now starting to figure out how powerful a tool it can be.

Tell us about the Program Integrity Analytics Solution that you started at Grant Thornton. How does it work?

Fraud analytics can yield a lot of low-hanging fruit. There are three pretty reliable government data sources that make it easy to look for and find fraud:

- Purchase cards/travel cards. This is reliable bank data, and you’ll have fraud in every agency. These are the most basic analytics and I think every single agency should be doing this sort of basic data matching and dashboarding on these data sources.

- Payroll data. These data sources are usually pretty clean and you can identify things like ghost employees, timecard padding, etc., quickly and easily.

- Procurement data. Whether it’s a contractor or some other type of vendor, assuming the data is relatively clean, agencies can easily build tests to find shell company schemes and identify trends that indicate billing irregularities, etc.

Grant Thornton has built a set of test scripts for those three use cases that automatically provide dashboards and results. We also just built a new workers’ compensation module.

The analytics tests are built in SQL and use Tableau out-of-the-box for dashboards. An agency can come to us, and instead of somebody going through all purchase card transactions every month, they can just run it through the Tableau analytics platform. Agencies can choose one or all of the scripts and run a monthly or bimonthly report, and they can tailor the test scripts to their particular functions.
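To give a sense of what a SQL-based test of this kind might look like, here is a hedged sketch of a ghost-employee check, run through Python's built-in sqlite3 module. The tables, column names, and sample rows are hypothetical, and the rule itself (payroll payments to an ID that is missing from the HR roster) is one assumed definition of a ghost employee, not Grant Thornton's actual script.

```python
import sqlite3

# In-memory database with hypothetical HR and payroll tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE hr_roster (employee_id TEXT PRIMARY KEY, name TEXT);
CREATE TABLE payroll (
    payment_id INTEGER PRIMARY KEY,
    employee_id TEXT,
    amount REAL
);
INSERT INTO hr_roster VALUES ('E001', 'A. Rivera'), ('E002', 'B. Chen');
INSERT INTO payroll (employee_id, amount) VALUES
    ('E001', 2500.0), ('E002', 2700.0), ('E999', 2600.0), ('E999', 2600.0);
""")

# Assumed rule: anyone paid through payroll but absent from the roster.
GHOST_EMPLOYEE_TEST = """
SELECT p.employee_id, COUNT(*) AS payments, SUM(p.amount) AS total_paid
FROM payroll AS p
LEFT JOIN hr_roster AS h ON h.employee_id = p.employee_id
WHERE h.employee_id IS NULL
GROUP BY p.employee_id
ORDER BY total_paid DESC;
"""

ghost_rows = conn.execute(GHOST_EMPLOYEE_TEST).fetchall()
```

The result set is the kind of table a dashboard could sit on top of: one row per suspect ID, with the payment count and total paid. A hit is a lead for follow-up, not proof of fraud.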

Most agencies have stable payroll and vendor data. It might take an hour or two for us to cleanse that data. Some agencies send data to us. Some are hesitant to send data, but we can work on their servers, or work on a laptop in their environment and build a dashboard there.

For example, if your agency wants to know if there are shell companies, you can compare vendor addresses to employee home addresses. It’s really simple to display that in a Tableau dashboard. You could also match vendors that are in the same apartment building as employees, which would be a red flag for further investigation. We can also match vendor addresses to postal service addresses with geographic tools in Tableau to look for ones that are P.O. boxes, and not a business address.
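The address checks described above can be sketched in a few lines of Python. This is an assumption-laden illustration, not the Tableau-based approach from the interview: it swaps in simple text normalization and a regular expression for the geographic and postal service matching, and the function names, record layouts, and sample addresses are all hypothetical.

```python
import re

# Rough patterns; real address data would need a proper standardization step.
PO_BOX = re.compile(r"\bp\.?\s*o\.?\s*box\b", re.IGNORECASE)
UNIT = re.compile(r"\b(?:apt|apartment|unit|suite|ste)\b\.?\s*\w+|#\s*\w+", re.IGNORECASE)


def normalize(address):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s#]", " ", address.lower())
    return " ".join(cleaned.split())


def building_key(address):
    """Normalize after dropping the apartment or suite, to compare buildings."""
    return normalize(UNIT.sub(" ", address))


def flag_vendors(vendors, employees):
    """Return (vendor_id, reason, employee_id) tuples for vendors worth a closer look."""
    exact, building = {}, {}
    for employee_id, address in employees:
        exact.setdefault(normalize(address), []).append(employee_id)
        building.setdefault(building_key(address), []).append(employee_id)

    flags = []
    for vendor_id, address in vendors:
        if PO_BOX.search(address):
            flags.append((vendor_id, "po_box", None))
        same_address = exact.get(normalize(address), [])
        for employee_id in same_address:
            flags.append((vendor_id, "same_address", employee_id))
        for employee_id in building.get(building_key(address), []):
            if employee_id not in same_address:
                flags.append((vendor_id, "same_building", employee_id))
    return flags


# Hypothetical sample data.
employees = [
    ("E001", "12 Oak St, Apt 4B, Springfield"),
    ("E002", "98 Pine Ave, Springfield"),
]
vendors = [
    ("V1", "98 Pine Ave., Springfield"),
    ("V2", "12 Oak St. Apt 9, Springfield"),
    ("V3", "P.O. Box 77, Springfield"),
    ("V4", "500 Market St, Springfield"),
]
flags = flag_vendors(vendors, employees)
```

In this sample, V1 shares an address with an employee, V2 shares a building, V3 uses a P.O. box, and V4 is not flagged. Exact string matching after normalization will miss variants such as "Street" versus "St", so this should be read as a starting point only.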

If every major agency did those three analyses, we’d be in such a better place. Most agencies should be able to do them with little problem. These are simple fraud tests using just a couple of databases and it could save millions of dollars.

It’s frustrating that agencies aren’t doing more analyses like these, because it’s so easy to do.

This solution allows for customization and can meet varying levels of analytics maturities. What do government agencies need to have in place before starting with a program like this?

Their data needs to be decent, which is a big part of the challenge. Some agency data can be challenging to cleanse and sort because it’s not being collected consistently. They don’t have a data lake or a centralized repository. That’s one of their biggest hindrances.

We started with those four areas because the data sources are most stable. Most agencies can run basic reports in a month or two. Obviously, bank data is reliable, so that’s a great place to start. It takes very little data cleansing and transforming on our part to be able to use those analytical tools and other dashboards for them.

Other data sources can vary in quality by agency. If an agency wants to customize the solution, it would take more time to cleanse and verify data and build scripts for them, but the return on that investment is very high in almost all cases.

What results are you seeing from your government clients who are using this Tableau solution?

Best practices and outcomes in government are pretty limited so far, but there are pockets of excellence. We recently helped Veterans Affairs by building modules that identified overbilling patterns. They had been looking at bills on a per-patient or per-bill basis, but not across providers. In isolation, the bills looked fine, but when you looked at all the bills from one provider, patterns emerged. We built scripts that showed anomalies such as the number of doctors who billed more than 24 hours in a day and were able to identify hundreds of cases of overbilling very quickly. We also ran some similar analytics on workers’ compensation data for a locality that allowed us to identify some very troubling trends in claims.
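The "more than 24 hours in a day" test shows why aggregating across a provider matters: no single bill is impossible, but the daily total is. A minimal Python sketch follows, assuming a hypothetical claim layout of provider ID, service date, and billed hours; it is not the script built for Veterans Affairs.

```python
from collections import defaultdict


def impossible_billing_days(claims, max_hours=24.0):
    """Return sorted (provider_id, service_date, total_hours) where the daily total exceeds `max_hours`.

    `claims` is an iterable of (provider_id, service_date, hours) tuples.
    The layout is hypothetical.
    """
    totals = defaultdict(float)
    for provider_id, service_date, hours in claims:
        # Sum across all patients and bills for the same provider and day.
        totals[(provider_id, service_date)] += hours
    return sorted(
        (provider_id, service_date, total)
        for (provider_id, service_date), total in totals.items()
        if total > max_hours
    )


# Hypothetical sample: each DR-1 bill on 2020-03-02 looks reasonable alone.
claims = [
    ("DR-1", "2020-03-02", 9.0),
    ("DR-1", "2020-03-02", 8.5),
    ("DR-1", "2020-03-02", 7.5),
    ("DR-2", "2020-03-02", 8.0),
    ("DR-1", "2020-03-03", 6.0),
]
suspect_days = impossible_billing_days(claims)
```

Here the three DR-1 bills add up to 25 hours on one day, which is the pattern a per-bill review would not catch.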

The Foundations for Evidence-Based Policymaking Act of 2018 created the chief data officer position in federal departments. What impact is this having on federal financial offices?

The CDO movement is really great. It’s going to help agencies a lot. But CDOs are going to run into the same challenges as those of us in the private sector who try to help and then find that a lot of data is unreliable and not consolidated in a way that makes it usable. A lot of that has to do with the lack of sharing, even within departments. So, CDOs are awesome, but they are probably five to ten years away from being able to fully analyze the data that’s available today. What can speed that up is for agencies to start now with a data strategy that addresses those challenges, and to use a visual analytics platform to automate much of the work and get much better results.
