Our Journey to Kubernetes — Part 1, Inception

Tableau Online is one of the fastest-growing parts of Tableau. As we experienced rapid growth, our Cloud Engineering teams found it increasingly expensive to keep up with operations, mostly around how we managed infrastructure, deployed our application, and scaled our software in the cloud. Several years back, we took a hard look at these growing pains and realized we needed to revisit some of our core architecture. We landed on Kubernetes as a way forward.

Change guided by two core principles

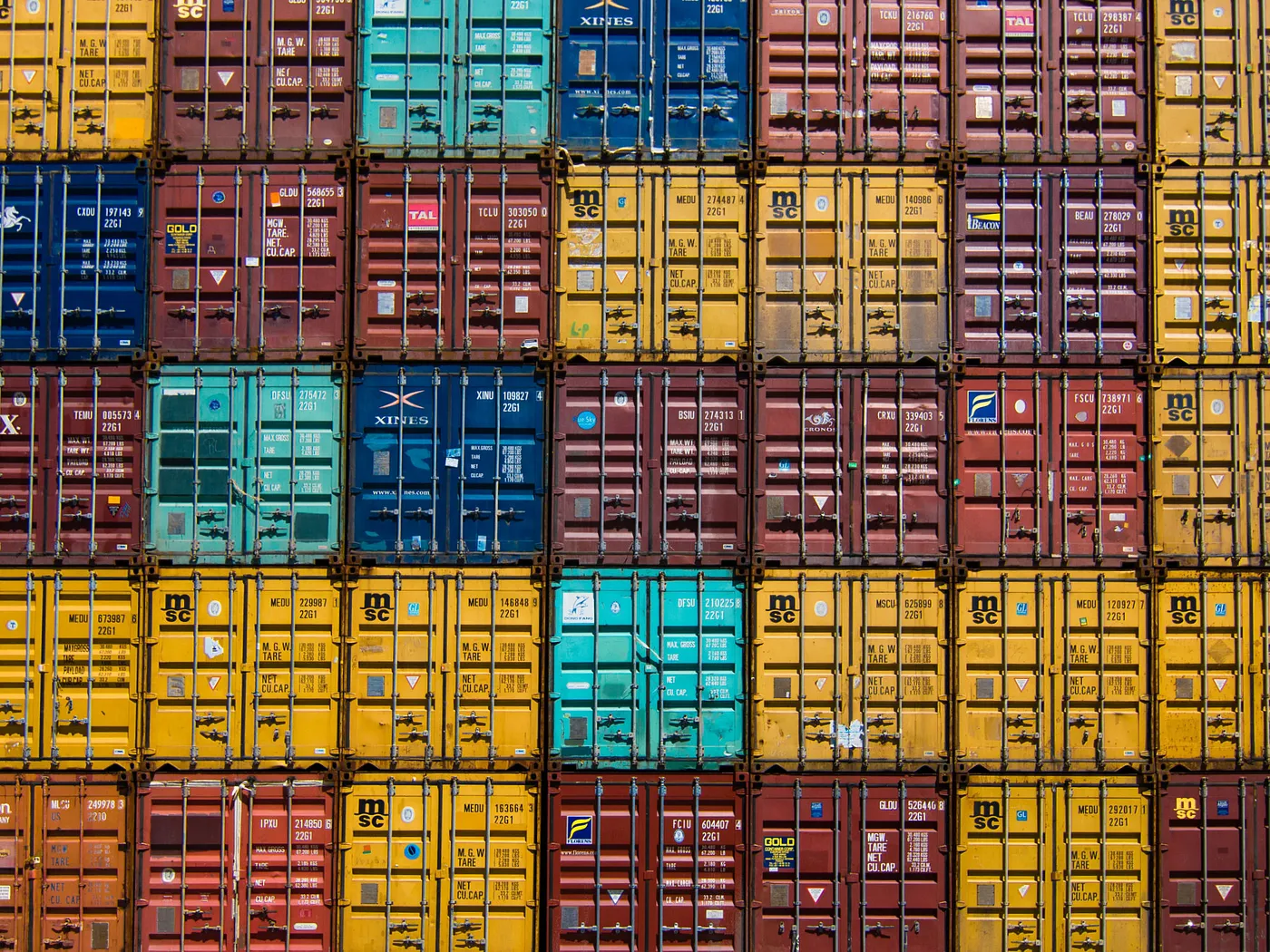

Early on, we decided that any new platform needed to fully embrace Immutable Infrastructure/deployments and Cattle over Pets as core principles.

Immutable infrastructure and deployments help to make deployments safer and more predictable. Containers address portability, with all the runtime and tool dependencies baked in at build time. Instead of upgrading or patching in place, we could safely deploy a new version and switch traffic over to it. Rollback is as simple as rolling forward with an old version.
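To make "rollback is rolling forward with an old version" concrete, here is a minimal sketch in Python. It is a toy model, not our deployment tooling: the `Release` and `Service` names and the image tags are hypothetical, chosen only to illustrate that a rollback appends a new deployment of an older immutable image instead of mutating anything in place.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class Release:
    """An immutable release: once built, the image tag never changes."""
    image: str


@dataclass
class Service:
    """Hypothetical service whose history only ever grows."""
    history: List[Release] = field(default_factory=list)

    @property
    def live(self) -> Optional[Release]:
        # The most recently deployed release receives traffic.
        return self.history[-1] if self.history else None

    def deploy(self, image: str) -> Release:
        # Deploying never patches an existing release; it adds a new one.
        release = Release(image)
        self.history.append(release)
        return release

    def rollback(self) -> Release:
        # Find the most recent release whose image differs from the live one
        # and roll *forward* to it by deploying it again.
        current = self.live
        if current is None:
            raise RuntimeError("nothing has been deployed yet")
        for release in reversed(self.history):
            if release.image != current.image:
                return self.deploy(release.image)
        raise RuntimeError("no earlier version to roll back to")


service = Service()
service.deploy("jobrunner:1.0")  # hypothetical image tags
service.deploy("jobrunner:1.1")
service.rollback()
print(service.live.image)
```

Because the rollback is just another deployment, it exercises the same code path as every normal release, which is a large part of why it is predictable.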

Cattle over pets means treating infrastructure as interchangeable and automating all aspects of its lifecycle. This is a core tenet of Kubernetes, where worker nodes are nameless and come and go as needed. We went a step further and treat our Kubernetes clusters themselves as immutable cattle. For platform or core service upgrades, we create a new cluster and redeploy our workloads to it (similar to a blue/green application deployment).

These principles have paid dividends:

- Testing is more manageable, as we do not have to test upgrade/rollback paths. We have done some major infrastructure upgrades, and we do not fear new versions of Kubernetes/EKS.

- Safe and easy rollback in case of failure, as we can remove the new cluster if it is not working correctly.

- One day we had a problem with networking on a prod cluster. We created a new working cluster, which allowed us to troubleshoot the old cluster without a sense of urgency and panic. (It was DNS, by the way. It’s always DNS. ;-)

- We have had several cutover events, like changing logging providers, where we wanted to switch without losing logs or having redundant log entries. Blue/green allows for a seamless cutover.

Problems that drove us to change

Even though Tableau Online was already hosted in the public cloud using AWS, we struggled to achieve the cloud's key benefits.

Our online infrastructure was static and rigid. We required manual intervention to scale or respond to issues, and our average response took days rather than minutes. Our deployment orchestration was complicated and hard to change. We struggled with repeatability and flaky deployments. Rollback was difficult and error-prone. We could not support advanced deployment strategies such as rolling, canary, or blue/green. We used resources inefficiently because experimentation was difficult and expensive.

We found that developers were struggling to understand how to deploy Online with our proprietary tools. Our tools used the same deployment mechanism we use in on-premises/self-hosted installations while attempting to address cloud-scale, multi-tenant operational challenges.

We were at a crossroads. Should we maintain the course and improve our existing tools? We had a high sunk cost in our existing tooling and lots of blood, sweat, and tears! We had done a quick estimate of how long it would take to meet our growing list of requirements and determined it would take 2–3 years for our feature team to meet just the basic needs. But if we forged a new path, should Tableau Online diverge from how we deploy to on-premises/self-hosted environments?

Why Kubernetes?

At the time, Kubernetes was a relatively new platform but was gaining rapid traction in the industry. We compared Kubernetes to our proprietary deployment tooling and our list of future requirements. We found Kubernetes came out way ahead on paper, based on these capabilities:

- Support for side-by-side, rolling, or blue/green deployments, with straightforward rollback and roll forward.

- Loose coupling between applications and the infrastructure they run on. We can have a standard pool of workers that scales up or down to meet the needs of deployed services. This results in less infrastructure to manage and allows for rapid experimentation.

- Multiple tools, such as kubectl, Helm, and Prometheus, with documentation and training available.

- Built-in capabilities for auto-scaling.

- Built-in support for controls (RBAC) and auditing (events and logs).
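As an illustration of the built-in auto-scaling capability, the sketch below reproduces the scaling rule that the Kubernetes documentation gives for the Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up, with no change when the ratio is within a tolerance (0.1 by default). This post does not say which autoscaler or metrics we evaluated, so treat the function name and the sample numbers as assumptions made for the example.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    tolerance: float = 0.1,
) -> int:
    """Replica count suggested by the documented HPA scaling rule.

    desired = ceil(current_replicas * current_metric / target_metric)
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Within the tolerance band the autoscaler leaves the workload alone,
    # which avoids flapping on small metric fluctuations.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Hypothetical numbers: 4 pods, observed load twice the target.
print(desired_replicas(4, current_metric=200, target_metric=100))
```

The real autoscaler also applies minimum and maximum replica bounds and stabilization windows, which this sketch leaves out.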

The value of containers and Kubernetes as an orchestration layer, if we could make it work with our Tableau software, was crystal clear.

We decided to do a pilot with containers and Kubernetes to ascertain a viable path forward. We focused on our asynchronous background job runners first, which are lower risk because they have built-in retry and tolerate some failure by design. Focusing on background tasks also allowed us to postpone other issues like service connectivity and ingress, which are complicated in Kubernetes in general and especially so for Tableau Online (more on this in another post).

That was easy, perhaps too easy.

The first effort to run in Kubernetes was deceptively easy. It did not take long to have a successful proof of concept. In relatively short order, we created a container build pipeline and demonstrated automated rollback, advanced deployment strategies, auto-scaling, flexible topology, improved resource utilization, and considerable improvements in operational agility. In one proof of concept over three months, we demonstrated a thin slice of all the capabilities we projected would take two to three years to build out using our proprietary deployment software. After you demo any compelling proof of concept, there is a chorus of people yelling to “Ship it!” Of course, the reality is rarely that easy.

One of the challenges of any platform shift is the vast number of core services that must reach parity with the legacy platform. Enterprise software requires a lot of supporting tools. We needed a new answer for deployment management, infrastructure management, logging, metrics, crash reporting, audit/change controls, security/permissions, service connectivity, and more. Each of these sub-systems required a fair amount of discovery to understand how it works today in Tableau Online and how to translate it to the Kubernetes platform without breaking changes.

Learning curve

The Kubernetes ecosystem introduces several new concepts like Helm charts, resource requests/limits, daemon sets, pod disruption budgets, volume management, taints and tolerations, etc. Honestly, this is a lot, and it is overwhelming for most developers. Our team struggled with what level of abstraction to maintain with our client teams. Should we shield other dev teams from Kubernetes specifics? Or should we push teams to learn the details of Kubernetes for more ownership/accountability? We started with the former mindset (abstract away Kubernetes details) and have slowly shifted toward the latter (teach teams how to work with Kubernetes). Our team discovered our abstractions could not keep up, especially considering the size of our core team. We realized education and outreach allowed more teams at Tableau to operate their services better and create a community of practice. We are even starting to see some early adopters help new teams and services onboard to Kubernetes without needing our core team’s help.

We have faced our fair share of gotchas specific to running in containers. One example is that different containers running in the same pod will likely have overlapping process IDs (PIDs), which broke some of our internal process resource monitoring software that expected each PID to be unique. Some of our software could not use the default network settings, and setting those values through sysctl took a lot of troubleshooting (do we configure on the host or the container?). We found setting the time zone for a pod/container was more difficult than we imagined. Our code also had to be adjusted to understand available memory in a container setting. We did not initially build Tableau Server to run in a resource-governed environment; our next post in the series will go into a lot more detail on our trials and tribulations with memory resource limits.
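To give a flavor of the "available memory in a container" problem: inside a container, host-level memory figures describe the whole node, while the limit that actually applies to the process lives in the cgroup filesystem. The sketch below is a minimal illustration in Python, not the change we made to Tableau Server. It assumes the standard Linux cgroup mount points (`memory.max` for cgroup v2, `memory.limit_in_bytes` for cgroup v1), and the function names and the threshold used to detect "no limit" on v1 are choices made for this example.

```python
from pathlib import Path
from typing import Iterable, Optional

# Standard locations on most Linux container runtimes (assumed here).
CGROUP_V2_LIMIT = Path("/sys/fs/cgroup/memory.max")
CGROUP_V1_LIMIT = Path("/sys/fs/cgroup/memory/memory.limit_in_bytes")

# cgroup v1 reports a huge page-aligned number when no limit is set;
# anything at or above this threshold is treated as "unlimited".
UNLIMITED_THRESHOLD = 1 << 60


def parse_limit(raw: str) -> Optional[int]:
    """Parse a cgroup memory limit; return None when there is no limit."""
    value = raw.strip()
    if value == "max":  # cgroup v2 spelling of "no limit"
        return None
    limit = int(value)
    return None if limit >= UNLIMITED_THRESHOLD else limit


def container_memory_limit(
    paths: Iterable[Path] = (CGROUP_V2_LIMIT, CGROUP_V1_LIMIT),
) -> Optional[int]:
    """Return the memory limit in bytes, or None if none can be found."""
    for path in paths:
        try:
            return parse_limit(path.read_text())
        except (OSError, ValueError):
            # File missing or unreadable: try the next known location.
            continue
    return None


limit = container_memory_limit()
print("no cgroup memory limit found" if limit is None else f"{limit} bytes")
```

Software that sizes caches or thread pools from total system memory can use a check like this to size itself from the container limit instead.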

Stay tuned

We have not converted everything to Kubernetes. There are some critical wins in the parts we have migrated, and we have momentum now. We have broken some of our static dependencies. We have early experiments with auto-scaling (more on this later in the series), and we can respond to scale needs much more quickly. We can experiment with pod topology, density, and resource allocation, both in canary and blue/green scenarios. We are enjoying much more operational agility, and those are just the early results.

This post is just the first step chronicling our journey towards running all of Tableau Online in Kubernetes. Here we only scratch the surface of what motivated us. We will cover resource management and auto-scaling adventures in our next post and service connectivity in our final post.