Consolidating trust in data with Tableau Data Server

Today’s guest blog is from Lorena Vazquez, a Senior Software Engineer at Cboe Global Markets. Lorena is part of the BI and Reporting Engineering team and also serves as the Tableau Server Administrator.

Manager: "I received these revenue numbers via e-mail from someone in accounting, but they don't match the numbers you provided for our quarterly business review. Where did you get your numbers? Why are they different?"

Analyst: "I got the numbers off a spreadsheet that is generated by a report by the IT department. I don't know where accounting got their numbers. But, my numbers are right."

Manager: “We need to get to the bottom of this.”

We've all experienced this story one way or another, whether as the manager, the analyst, or the IT department. How do we build confidence in data that is shared across departments and roles? For me, the answer was Tableau Data Server.

What is Data Server, you ask? It’s a component within Tableau Server that allows data sources to be published, shared, and refreshed within a Tableau Server site. In my experience, Tableau Data Server gives us this and more.

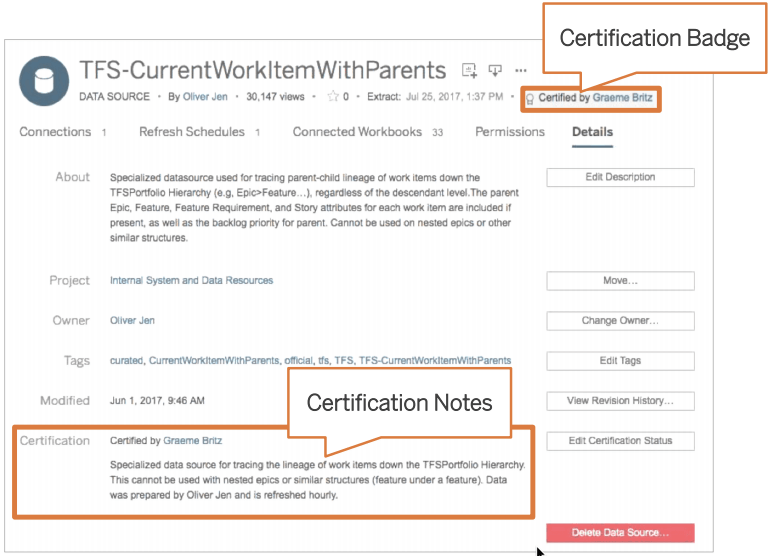

- Tableau Data Server can provide certified, published data sources that remove the risk of ambiguity for important data such as revenue information.

- Data sources can be refreshed on a schedule. No more worrying about manually updating and republishing data sources to have the latest data. And you can rest assured that if a data source fails, you will be notified.

- More importantly, a published data source is a defined set of dimensions, measures, and calculations that are documented and reusable for future analysis and dashboards.

- For me, Tableau Data Server added one more benefit: reducing the impact on production database systems. Using data extracts reduced the number of live queries against our production databases, which gave IT peace of mind.

How did I get started?

First, I started with a data source. Although you can schedule a data source refresh to add new data, Data Server also works for static data sources. A few use cases of static data are historical data sets that will never be modified and/or exist outside of your database domain. Static data sources are the simplest: we generate a Tableau extract once and publish it to Tableau Server.

For data sources that refresh, we needed to make sure a few things were in place before we even published to Server. When we first started, we ran into a few issues that I’ll mention below, and those issues led us to create a development process for new data sources.

Whichever data source you use, you have to make sure that Tableau Server has access to the source. If it is a database server, make sure that Tableau Server can connect to the database (both IP address and port). As I am the Tableau Server Administrator, I was able to test whether connectivity existed. I then reached out to our DBA team to ensure we had proper credentials for Tableau. Early on, we ran into a connectivity issue with one data source: after we published it and scheduled the refresh, the refresh failed because Server could not connect. We contacted the DBA team, who confirmed that the database server was rejecting the connection. Once access was granted to Tableau Server, the refresh succeeded, and we added a connectivity check to our workflow.
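A quick way to test that first step is a plain TCP check run from the Tableau Server machine. The sketch below is only an illustration, not part of Tableau: the function name `can_reach` and the example host and port in the usage note are assumptions. It confirms that the port is reachable; it says nothing about whether the database will accept your credentials.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        # create_connection resolves the host name and opens the socket;
        # the with-block closes it again as soon as the check is done.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

For example, `can_reach("db.example.internal", 5432)` (a hypothetical host and port) returning `False` would be a good reason to talk to your DBA or network team before publishing.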

The second thing to check off the list is to make sure that the database drivers are installed on Tableau Server. You don't need to worry about maintaining various versions of database drivers because you’ll only have one on your server. Gone are the days of your support team installing database drivers on each user’s computer in order to access the database. With a published data source on Tableau Server, you just point your users to the data source and they connect through Tableau Server. As a Tableau Server Administrator, I control the data sources and drivers that are used and ensure that each driver is a version supported by Tableau Server. Make sure that the correct drivers are also installed on your Desktop users' computers. We maintain a list of the database drivers we use and share that with our IT help desk team.

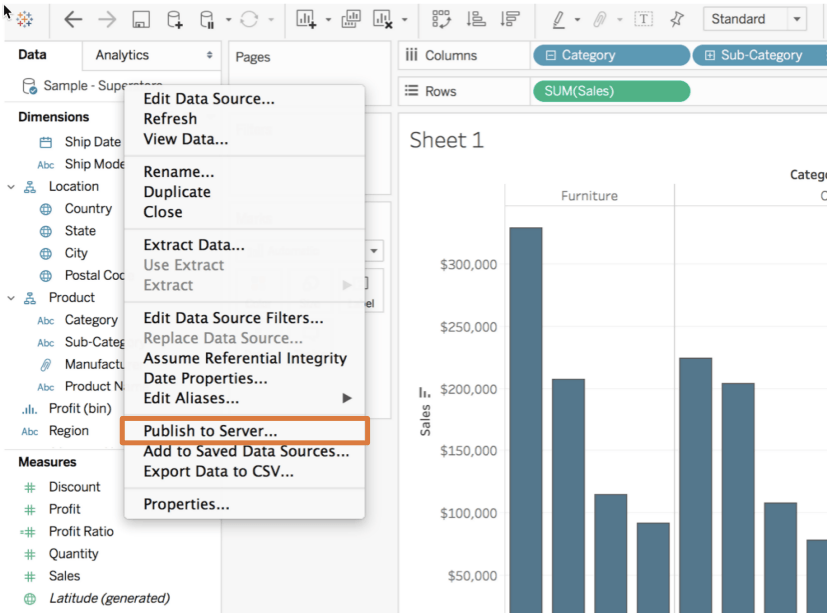

Once you have database connectivity, network connectivity, and/or file share connectivity, you can proceed to publishing the data source to Tableau Server. It may seem like a lot of steps, but the reward for maintaining curated and certified data sources outweighs the one-time setup per data source.

Applications in the real world

One of the biggest concerns, even for me, with giving everyone access to data, is Shadow IT. Everyone has their own copies of data, they use different terminology for the same dimension or measure, and it becomes an overall data governance nightmare. With Tableau Data Server, that nightmare goes away and you can sleep better at night. At least, I do.

Let’s talk about how Tableau Data Server provides data governance, including data quality.

Data governance

Back to our story from the beginning. How does Tableau Data Server change the conversation now that we know we can have curated and certified data sets?

Analyst: "I worked with our team to get that data available on Tableau Server. We don't have to worry about looking at stale data anymore because it is refreshed every day! Even better, accounting stopped using those manual spreadsheets so we’re sourcing their information from the same place."

Manager: "So you mean we won't have different numbers anymore? That's great."

Publishing a data source on Tableau Server provides consistency for everyone accessing that data set. The dimensions and measures are curated, defined, and described for all to see. Tableau Server even allows us to mark such data sources as certified. The pitfalls you can run into with published data sources happen when you don’t do these things. If you publish a data source without descriptions, give measures and dimensions random or nonsensical names, and/or don’t have a data workflow process in place, you risk causing confusion, mistrust in the data, and an overall nightmare for data management. I’ve experienced this nightmare. We set up published data sources without any descriptions, and that caused confusion for end users.

How did we get around that? We created a workflow process for creating data sources on Tableau Server. This could be as lean or as heavy as you want. But, in my experience, it should meet at least the following:

- Dimensions and measures are clearly defined in your company’s business language.

- Calculations are named appropriately and include necessary comments. (You don’t want to have “total” as one calculation and another as “total total.”)

- Dimensions include descriptions if the name alone is not sufficient. You can include the source of the data from upstream applications like a website form or order form.

- Mark your data sources as certified once you’ve completed these steps. It signifies to users that this data can be trusted.

Work with your team and your CoE to define what this workflow means for your group. It takes some time to put in place, but it improves everyone's understanding of the data on Tableau Server.
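If you keep an inventory of a data source's fields, a checklist like the one above can be partly automated before you certify. The sketch below is a hypothetical pre-certification check; the `Field` class and `review_fields` function are my own illustration and do not come from any Tableau API. It is also stricter than the checklist, because it flags every missing description and leaves it to a reviewer to decide whether the name alone is sufficient.

```python
from dataclasses import dataclass


@dataclass
class Field:
    """One dimension, measure, or calculation in a data source (illustrative)."""
    name: str
    description: str = ""
    is_calculation: bool = False
    comment: str = ""


def review_fields(fields: list[Field]) -> list[str]:
    """Return human-readable problems; an empty list means nothing was flagged."""
    problems = []
    seen = set()
    for f in fields:
        key = f.name.strip().lower()
        if not key:
            problems.append("field with empty name")
            continue
        if key in seen:
            problems.append(f"duplicate name: {f.name}")
        seen.add(key)
        # Catch names like "total total", where a word repeats back to back.
        words = key.split()
        if any(a == b for a, b in zip(words, words[1:])):
            problems.append(f"repeated word in name: {f.name}")
        if not f.description.strip():
            problems.append(f"missing description: {f.name}")
        if f.is_calculation and not f.comment.strip():
            problems.append(f"calculation without comment: {f.name}")
    return problems
```

A field list that comes back with no problems is a candidate for certification, not a guarantee; someone who knows the business language still needs to read the names and descriptions.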

Data quality

Part of data governance is data quality. How do you ensure that the data you have is correct? In the story, the analyst mentioned that with the data being on Tableau Server, that data was going to be refreshed daily. Tableau Data Server allows you to define schedules for your extracts at various cadences, including hourly.

A potential pitfall with scheduling a data source is missing data if the data is not available at the time the extract runs. We can take data quality validation one step further by creating a dashboard that queries your Published Data Source and your original data source to compare the total number of records. With Data-Driven Alerts, you can get notified if the data source is out of sync. This is something that I use daily for some of the more critical data sources.
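The comparison behind that dashboard is simple enough to write down. The sketch below is not how Data-Driven Alerts work internally; it is the same record-count check expressed as a small function, assuming you have already obtained the two counts yourself. The name `out_of_sync` and the `tolerance` parameter are my own.

```python
def out_of_sync(source_count: int, published_count: int, tolerance: float = 0.0) -> bool:
    """Return True when the published record count differs from the source
    count by more than `tolerance`, expressed as a fraction of the source count."""
    if source_count < 0 or published_count < 0:
        raise ValueError("record counts must be non-negative")
    if source_count == 0:
        # An empty source only matches an empty published data source.
        return published_count != 0
    return abs(source_count - published_count) / source_count > tolerance
```

With the default tolerance of zero, any difference counts as out of sync. A small tolerance can make sense for sources that keep receiving rows while the extract is running.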

Alternatively, the Tableau Server REST API and the Tableau Data Extract Command-Line Utility allow developers to create a “push job” that refreshes data on Tableau Server when data is available in the original data source. Instead of Tableau Server pulling the data from the original database on its own schedule, the push job (which runs outside of the Tableau Server schedule) waits for data to be populated in the source database before it runs the extract refresh on Tableau Server. This approach works only if you have access to a scheduling program. Work with your development team or the data team responsible for loading data into the database to see how you can add this job.
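Here is a rough sketch of what such a push job could look like using the REST API route only (it does not use the command-line utility). Several things are assumptions you should verify against the REST API documentation for your server version: the endpoint path and the `X-Tableau-Auth` header follow my understanding of the "Update Data Source Now" method, and the function names, the `source_ready` check, and the retry settings are hypothetical. Signing in to obtain the token, site ID, and data source ID is left out.

```python
import time
import urllib.request
from typing import Callable


def build_refresh_request(server_url: str, api_version: str, site_id: str,
                          datasource_id: str, auth_token: str) -> urllib.request.Request:
    """Build (but do not send) the POST that asks Tableau Server to refresh
    one published data source. Path and header are assumptions to verify."""
    url = (f"{server_url.rstrip('/')}/api/{api_version}"
           f"/sites/{site_id}/datasources/{datasource_id}/refresh")
    return urllib.request.Request(
        url,
        data=b"<tsRequest />",  # empty request body
        method="POST",
        headers={"X-Tableau-Auth": auth_token, "Content-Type": "application/xml"},
    )


def push_when_ready(source_ready: Callable[[], bool], trigger: Callable[[], None],
                    attempts: int = 12, wait_seconds: float = 300.0,
                    sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll `source_ready`; call `trigger` once as soon as it returns True.
    Return False if the data never became available within `attempts` checks."""
    for attempt in range(attempts):
        if source_ready():
            trigger()
            return True
        if attempt < attempts - 1:
            sleep(wait_seconds)
    return False
```

In a real job, `source_ready` would run whatever query tells you the nightly load has finished, and `trigger` would send the request with `urllib.request.urlopen(build_refresh_request(...))`. Keeping the two pieces separate lets your scheduling program own the timing while Tableau Server only does the refresh.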

Advocacy

Having all these published data sources and processes in place without sharing this information is wasted effort. The next step is advocating the use of Tableau Server and the published data sources in Data Server. Think about sharing the new data sources as part of a monthly newsletter, hosting training sessions to review new data sources, maybe even a how-to video on using that data source if that works in your organization. Most of all, ask for feedback. Make sure that the users understand the dimensions and measures and the use case for using that data source. The more you engage with your users, the more they will use Tableau Server.

Go forth

Now that you’ve learned a bit more about Data Server, I encourage you to first reach out to your CoE, internal Tableau User Group, or Tableau Ambassadors to find out how you can leverage Tableau Data Server. Think about the processes that you currently have and how you can improve them with Data Server. We are all searching for relevant and valuable data; let’s make sure we only have one source of truth for it.

Learn more in this Tableau Data Server SlideShare.

This post is part of a series where Tableau Community members share their experiences moving from traditional to modern BI. Read more of their thoughts and lessons learned about escaping traditional BI, a framework for governance, deploying modern BI in a traditional environment, and scaling Tableau in the enterprise.