Stop Trying to DIY Your Way to Conversational Analytics

For most organizations, data and analytics remains a cost center—a massive investment in lakes and warehouses that hasn't yet paid its way. Businesses have hired brilliant analysts. Yet, for the average employee, data remains a friction-filled resource.

When a sales leader needs to know why revenue is dipping, they shouldn't have to log a ticket and hope the analyst’s definition of “revenue” matches the CRM. That is a cost. It burns time, it burns money, and it burns momentum.

The promise of conversational analytics—and the agentic AI revolution driving it—is to flip that dynamic on its head. It promises to turn your data into a profit center by lowering the barrier to entry so drastically that anyone can ask questions, get trusted answers, and take action immediately.

The appetite for this is massive. In fact, our State of Data and Analytics Report found that 94% of business leaders say they would perform better if they had direct data access in the programs and apps where they work the most.

Here is where things get messy: As leaders rush to adopt this technology, many confuse a simple interface with a complete solution. They see a chatbot that can answer “How many widgets did we sell?” and think they've solved the problem.

But they haven’t. They’ve just seen the tip of the iceberg. And if you don't understand what lies beneath, you aren't building a profit center—you're building a liability.

The commodity trap: Why NLQ is just the tip of the iceberg

What most people see today is natural language querying (NLQ). This is the ability to ask a factual question like, "How many customers are in California?" and get a direct answer or visualization, produced by translating the question into a SQL query.

Generative AI has advanced so quickly that basic NLQ has become a commodity. You can upload a CSV to a public LLM or have a developer hack together a "chat with data" tool in a weekend hackathon. It looks impressive on the surface.

But here is the reality check: NLQ is just the tip of the iceberg.

While NLQ handles the "what," it often fails at the "why" and "what next." It struggles with ambiguity and lacks business context. The risk is real—89% of data and analytics leaders say they’ve experienced inaccurate or misleading outputs with AI. And if an agent hallucinates a margin calculation because it doesn't understand your business logic, your team makes bad decisions faster.

A chatbot that guesses is a toy. A system that knows is a business tool. To move from the former to the latter, you need the massive infrastructure submerged beneath the surface.

Context, action, and depth take you beyond a simple chat

True conversational analytics requires significant technical weight “below the waterline” to ensure you can bet your business on the answers. Let’s take a closer look at how these submerged components work together to drive real value.

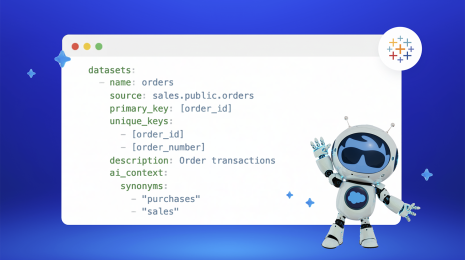

Unified data and semantic rigor

You cannot rely on an LLM to guess your business logic. You need a semantic model—the “source code” of your business understanding—that defines exactly what "profitability" or "churn" means, ensuring AI and employees can all speak your specific business language. The semantic model acts as a translator and guardrail, mapping the natural language request to the precise, governed metric defined by your business. The LLM then narrates the result returned by the model.

Imagine a retail manager asks, "What is our gross margin in EMEA?" A generic bot might calculate this as revenue minus cost of goods sold. However, your business definition of gross margin might specifically exclude shipping costs but include returns. Routing the question through the semantic layer applies your specific formula every time, so the manager gets the number that matches the CFO’s report, not a generic guess.
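To make the idea concrete, here is a minimal sketch of a metric lookup routed through a governed definition. Everything in it is an assumption for illustration: the `OrderLine` fields, the sample numbers, the `SEMANTIC_MODEL` dictionary, and the reading of "include returns" as "subtract returns from revenue." It is not how any particular product implements its semantic layer.

```python
from dataclasses import dataclass

# Hypothetical order rows; field names and values are invented for this sketch.
@dataclass(frozen=True)
class OrderLine:
    region: str
    revenue: float
    cogs: float
    shipping: float
    returns: float

def generic_gross_margin(rows):
    # What a generic bot might guess: revenue minus cost of goods sold.
    return sum(r.revenue - r.cogs for r in rows)

def governed_gross_margin(rows):
    # Assumed business definition: returns are deducted, shipping is ignored.
    return sum(r.revenue - r.returns - r.cogs for r in rows)

# A toy "semantic model": one governed formula per metric name.
SEMANTIC_MODEL = {"gross margin": governed_gross_margin}

def answer(metric, region, rows):
    # Look up the governed formula instead of letting a model improvise one.
    formula = SEMANTIC_MODEL[metric.lower()]
    return formula([r for r in rows if r.region == region])

ROWS = [
    OrderLine("EMEA", 1000.0, 600.0, 50.0, 100.0),
    OrderLine("EMEA", 500.0, 300.0, 20.0, 0.0),
    OrderLine("AMER", 800.0, 400.0, 30.0, 40.0),
]
```

With this sample data, `answer("Gross Margin", "EMEA", ROWS)` and `generic_gross_margin` disagree for EMEA because only the governed formula deducts returns. That gap is exactly the kind of mismatch with the CFO's report that a shared definition is meant to prevent.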

Contextual and proactive insights

A great analyst doesn't wait for a ticket; they tap you on the shoulder when something looks off. Your system should proactively monitor your metrics and alert you to trends without being asked.

Consider a supply chain lead. Instead of manually logging in to check inventory levels every morning, an agent proactively alerts them: "Inventory for SKU-123 at the Western Warehouse has dropped below safety stock levels." The insight finds the user before the issue worsens, rather than the user hunting for something to react to.
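As a rough sketch of what "proactive" means in practice, the check below scans inventory levels and produces an alert only when a threshold is crossed. The `StockLevel` type, its fields, and the sample quantities are hypothetical. A real monitoring agent would run on a schedule and deliver the message where the user already works.

```python
from dataclasses import dataclass

# Hypothetical inventory snapshot; names and quantities are illustrative only.
@dataclass(frozen=True)
class StockLevel:
    sku: str
    warehouse: str
    on_hand: int
    safety_stock: int

def safety_stock_alerts(levels):
    # Return one message per item whose on-hand quantity is below its safety stock.
    return [
        f"Inventory for {s.sku} at the {s.warehouse} has dropped below safety stock levels."
        for s in levels
        if s.on_hand < s.safety_stock
    ]

LEVELS = [
    StockLevel("SKU-123", "Western Warehouse", on_hand=40, safety_stock=100),
    StockLevel("SKU-456", "Eastern Warehouse", on_hand=500, safety_stock=100),
]

for message in safety_stock_alerts(LEVELS):
    print(message)
```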

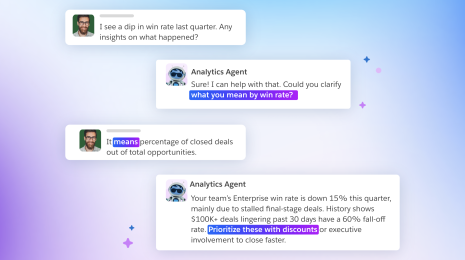

Deep exploration and continuous refinement

Business questions are rarely one-and-done. You need systems that handle complex, multi-step reasoning and predictive analytics while allowing for expert calibration. By testing and verifying responses against real-world questions, analysts can train the agent to improve its accuracy and reliability over time.

Say a marketing director sees a dip in leads and asks, "Why did performance drop last week?" A basic bot may simply confirm the drop happened. A deep exploration agent drills down: "Leads dropped 12% primarily driven by a sharp decrease in paid search traffic in the UK region, despite steady organic traffic."
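One simple way to picture that drill-down is a contribution analysis: compare two periods segment by segment and surface the segment responsible for the largest decline. The sketch below assumes lead counts keyed by a hypothetical (channel, region) pair, with invented numbers. Real agents layer far more reasoning on top of a step like this.

```python
def biggest_driver(previous, current):
    """Return (overall % change, segment with the largest decline, its delta)."""
    total_prev = sum(previous.values())
    total_cur = sum(current.values())
    pct_change = (total_cur - total_prev) * 100 / total_prev

    # Per-segment change; a segment missing from one period counts as zero there.
    segments = previous.keys() | current.keys()
    deltas = {s: current.get(s, 0) - previous.get(s, 0) for s in segments}

    # The most negative delta is the largest contributor to the drop.
    worst = min(deltas, key=deltas.get)
    return pct_change, worst, deltas[worst]

# Invented weekly lead counts by (channel, region).
PREVIOUS_WEEK = {
    ("paid search", "UK"): 300,
    ("organic", "UK"): 200,
    ("paid search", "US"): 300,
    ("organic", "US"): 200,
}
CURRENT_WEEK = {
    ("paid search", "UK"): 180,
    ("organic", "UK"): 200,
    ("paid search", "US"): 300,
    ("organic", "US"): 200,
}

pct, segment, delta = biggest_driver(PREVIOUS_WEEK, CURRENT_WEEK)
print(f"Leads changed {pct:.0f}%, driven mainly by {segment} ({delta:+d} leads).")
```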

Integrated actionability

Insights should trigger action, and it is even better if you can act at the point of insight without swivel-chairing between systems. Closing this insight-to-action gap (the “last mile” of business intelligence) is one of the most promising aspects of agentic analytics.

If your analytics agent tells a sales manager that a key deal is stalling, they shouldn't have to leave the chat to fix it. The agent should trigger a pre-built action from within the conversation, like assigning a new task—all without the user ever opening another tab or application.
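A "pre-built action" can be thought of as an entry in an allow-list that the agent is permitted to call. The sketch below is a hypothetical illustration of that pattern: the `ACTIONS` registry, the `assign_task` stub, and its parameters are all assumptions, and the stub only returns a message where a real system would call the CRM.

```python
from typing import Callable, Dict

# Registry of actions the agent is allowed to run from within the conversation.
ACTIONS: Dict[str, Callable[..., str]] = {}

def action(name):
    # Decorator that adds a function to the allow-list under the given name.
    def register(fn):
        ACTIONS[name] = fn
        return fn
    return register

@action("assign_task")
def assign_task(deal_id: str, owner: str) -> str:
    # Stub: a real implementation would create the task in the CRM.
    return f"Task assigned to {owner} for deal {deal_id}"

def run_action(name, **params):
    # Refuse anything that was not explicitly registered.
    if name not in ACTIONS:
        raise ValueError(f"Action not allowed: {name}")
    return ACTIONS[name](**params)

print(run_action("assign_task", deal_id="D-42", owner="Priya"))
```

Keeping actions in an explicit registry is one design choice among several. Its appeal is that the agent can only do what someone deliberately built and approved.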

A shiny toy or an enterprise-ready tool?

Without these elements, you end up with a glorified chatbot or, at best, agents that guess. Ultimately, reliable agent performance, the kind capable of understanding complex business intent, depends entirely on this full structure, underpinned by strong, trusted data governance.

A complete solution also allows users to take action at the point of insight within existing workflows, finally reducing the friction in the “last mile” for making faster decisions informed by data. This is the shift: moving from viewing data to doing something with it.

The DIY dilemma: Why "homegrown" solutions often fail

Looking at that list of requirements, you might be tempted to build a conversational analytics solution in-house. But stitching together disparate tools to build this infrastructure creates massive enterprise risk—a classic “cost center trap.”

First, there is the governance void. Security cannot be an afterthought. A DIY solution requires you to build robust, low-level security from scratch to prevent prompt hacking and unauthorized access. Most organizations aren't ready for this; currently, only 43% of data and analytics leaders have established formal data governance frameworks and policies.

Then, consider the maintenance treadmill. Model providers ship updates constantly. If you build this yourself, your most expensive data talent will spend their days monitoring performance, re-jigging prompts, and fixing broken integrations. It turns your data team into full-time mechanics, stuck fixing the plumbing rather than driving strategy.

For more on this topic, check out my discussion on LinkedIn.

Stop building, and start solving

The complexity required to deliver safe, accurate, and actionable conversational analytics is immense. Attempting to build this stack in-house diverts critical resources away from your actual business differentiation.

You don’t have to reinvent the wheel. By partnering with a solution provider that has decades of experience in analytics and data management, you inherit a platform that is already secure, governed, and scalable.

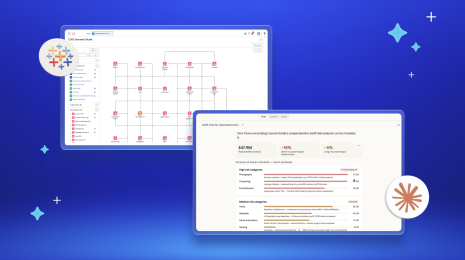

This is why we built Tableau Next. With true conversational analytics, trusted and relevant insights flow ambiently and reliably to every employee. It solves the "iceberg" problem out of the box, combining semantic truth, proactive insights, and seamless actionability.

Now with simplified per-user, per-month pricing, it's easier to adopt conversational analytics at scale. All analytical queries, data transforms, and AI usage are unmetered. Tableau Next is also available as a standalone offer—no bundle required.

Ready to accelerate your journey? Discover how Tableau Next, with Tableau Semantics, delivers true conversational analytics today.